ISIS 3 Application Documentation

findfeatures

Feature-based matching algorithms used to create ISIS control networks


Description

Introduction

findfeatures was developed to provide an alternative approach to creating image-based ISIS control point networks. Traditional ISIS control networks are typically created using equally spaced grids and area-based image matching (ABM) techniques. Control points are at the center of these grids and are not necessarily associated with any particular feature or interest point. findfeatures applies feature-based matching (FBM) algorithms using the full suite of OpenCV feature matching frameworks. The points detected by these algorithms are associated with specific surface features; each type of detector algorithm is designed to identify certain characteristics. Feature-based matching has a twenty-year history in computer vision and continues to benefit from improvements and advancements that make development of applications like this possible.

This application offers alternatives to traditional image matching options such as autoseed, seedgrid and coreg. Applications like coreg and pointreg use area-based matching, whereas findfeatures uses feature-based matching techniques. The OpenCV feature matching framework is used extensively in this application to implement a robust feature matching algorithm that is applied to single overlapping image pairs or to sets of multiple overlapping images.

Overview

Feature-based algorithms comprise three basic processes: detection of interest points (or keypoints), extraction of interest point descriptors and, finally, matching of interest point descriptors from two image sources. These three operations are performed by individual algorithms called detectors, extractors and matchers, respectively. These operations alone do not ensure that good, high-quality points are detected, because the result set is likely to contain many outliers.

findfeatures applies a robust outlier detection procedure that works well for many of the diverse observing conditions that commonly occur during image acquisition (e.g., opposing sun angles, a wide range of emission and incidence angles, etc.). Users can specify unique combinations of detector, extractor and matcher FBM algorithms using the OpenCV API through string specifications. The string specification of the FBM names the three algorithms and optional parameter values for each, using a custom syntax to provide flexibility.

findfeatures is designed to support many different image formats. However, ISIS images with camera models provide the greatest flexibility when using this feature matcher. Level 1 ISIS images with geometry can be effectively and efficiently matched by applying fast geometric transforms that project all overlapping candidate images (referred to as train images in OpenCV terminology) to the camera space of the match (or truth) image (referred to as the query image in OpenCV terminology). This single feature allows users to apply virtually all OpenCV detectors and extractors, including algorithms that are not scale and rotation invariant.

Other popular image formats are supported through the OpenCV imread() image reader API. Images supported by that reader can be provided as the image input files. However, these images do not have geometric functionality, so the fast geometric option is not available for them. As a consequence, FBM algorithms that are not scale and rotation invariant are not recommended for these images unless they are relatively spatially consistent. Point geometries cannot be provided either; only line/sample correlations are computed in these cases.

The OpenCV FBM algorithms and the parameterization of those algorithms are selected and provided with a specific string syntax. The string contains a feature detector, descriptor extractor and descriptor matcher algorithm specification, with optional parameterization of the robust matcher outlier detection and other processing algorithms. The general form of the FBM specification string is:

detector[@param1:value1@...]/extractor[@param1:value1@...][/matcher@param1:value1@...][/parameters@param1:value1@...]
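To make the syntax above concrete, here is a minimal Python sketch that splits a specification string into its components. It is not the parser used by findfeatures; the function name `parse_fbm_spec` is hypothetical, and the sketch assumes that unnamed components appear in the order detector, extractor, matcher and that the optional robust matcher section is introduced by the literal word `parameters`.

```python
def parse_fbm_spec(spec):
    """Split an FBM specification string into its components (illustrative only)."""
    # Assumed order of the unnamed components: detector, extractor, matcher.
    roles = iter(["detector", "extractor", "matcher"])
    parsed = {}
    for part in spec.split("/"):
        # The first "@"-separated token names the algorithm; the rest are param:value pairs.
        name, *pairs = part.split("@")
        params = {}
        for pair in pairs:
            key, _, value = pair.partition(":")
            params[key] = value
        if name.lower() == "parameters":
            # Assumption: the robust matcher section is identified by its literal name.
            parsed["parameters"] = params
            continue
        try:
            role = next(roles)
        except StopIteration:
            raise ValueError("too many components in specification: " + spec)
        parsed[role] = {"name": name, "params": params}
    return parsed


# Specification string taken from the debug log later in this document.
spec = "surf@hessianThreshold:100/surf/DescriptorMatcher.BFMatcher@normType:4@crossCheck:false"
for role, component in parse_fbm_spec(spec).items():
    print(role, component)
```

All values are kept as strings here; converting them to numbers or booleans is left out because the accepted types depend on each OpenCV algorithm.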

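Outlier detection is implemented as four separate steps that are applied after the FBM detector, extractor and matcher algorithms have completed. The four main steps, each of which is reported in the debug log described later in this document, are the ratio test, the symmetry test, the homography (RANSAC) test and the epipolar (fundamental matrix) test.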

The results of the FBM of all train images to the query image are written to an ISIS control network. The query image is automatically selected as the reference image, but geometry can come from the train source for two-pair image matching. Note that the goodness of fit does not follow the ABM range of -1 to 1. Instead, it is computed from the average of the responsivity of the query and train image keypoints.

isisminer can be used to determine overlapping image sets for input into this application for multi-matching FBM processing. cnetcombinept is designed to take all the resulting control networks from systematic regional or global processing with findfeatures and combine them. cnetcheck should be used to ensure the combined network is suitable for bundle adjustment and solving for radius using jigsaw. The bundle-adjusted control network then contains adjusted latitude/longitude coordinates and radius values at each control point. If the control network contains sufficient density, cnet2dem can be used to produce an interpolated DEM from the control network. CKs and SPKs can be generated using ckwriter and spkwriter, respectively, to capture the control for general distribution and use.

Parameterization of the Matcher

Much of the flexibility in findfeatures is the ability to alter parameters for the OpenCV matcher algorithms. Significant effort has been made to allow the user to change algorithm behaviors. Not only can users change OpenCV algorithm parameters, but there are additional parameters available in the robust matcher, particularly in the outlier detection processing, that can be modified. These parameters can be very helpful in diagnosing issues with the matching and outlier aspects of findfeatures. The following table describes all the parameters available that can be modified through the

Using Debugging to Diagnose Behavior

An additional feature of findfeatures is a detailed debugging report of processing behavior in real time for all matching and outlier detection algorithms. The data produced by this option is very useful for identifying the exact processing step where a matching operation fails. In turn, this allows users to alter parameters to address these issues and achieve successful matches that would otherwise not be possible. To invoke this option, set DEBUG=TRUE and optionally provide an output file (DEBUGLOG=filename) where the debug data is written. If no file is specified, output defaults to the terminal device. Here is an example (see the example section for details) of a debug session, with line numbers added for reference in the description that follows:

1 ---------------------------------------------------

2 Program: findfeatures

3 Version 0.1

4 Revision: $Revision: 6598 $

5 RunTime: 2016-03-15T14:25:18

6 OpenCV_Version: 2.4.6.1

7

8 System Environment...

9 Number available CPUs: 8

10 Number default threads: 8

11 Total threads: 8

12

13 Total Algorithms to Run: 1

14

15 @@ matcher-pair started on 2016-03-15T14:25:19

16

17 +++++++++++++++++++++++++++++

18 Entered RobustMatcher::match(MatchImage &query, MatchImage &trainer)...

19 Specification: surf@hessianThreshold:100/surf/DescriptorMatcher.BFMatcher@normType:4@crossCheck:false

20 ** Query Image: EW0211981114G.lev1.cub

21 FullSize: (1024, 1024)

22 Rendered: (1024, 1024)

23 ** Train Image: EW0242463603G.lev1.cub

24 FullSize: (1024, 1024)

25 Rendered: (1024, 1024)

26 --> Feature detection...

27 Total Query keypoints: 11823 [11823]

28 Total Trainer keypoints: 11989 [11989]

29 Processing Time: 0.476

30 Processing Keypoints/Sec: 50025.2

31 --> Extracting descriptors...

32 Processing Time(s): 0.599

33 Processing Descriptors/Sec: 39752.9

34

35 *Removing outliers from image pairs

36 Entered RobustMatcher::removeOutliers(Mat &query, vector<Mat> &trainer)...

37 --> Matching 2 nearest neighbors for ratio tests..

38 Query, Train Descriptors: 11823, 11989

39 Computing query->train Matches...

40 Total Matches Found: 11823

41 Processing Time: 0.352

42 Matches/second: 33588.1

43 Computing train->query Matches...

44 Total Matches Found: 11989

45 Processing Time: 0.356 <seconds>

46 Matches/second: 33677

47 -Ratio test on query->train matches...

48 Entered RobustMatcher::ratioTest(matches[2]) for 2 NearestNeighbors (NN)...

49 RobustMatcher::Ratio: 0.65

50 Total Input Matches Tested: 11823

51 Total Passing Ratio Tests: 988

52 Total Matches Removed: 10835

53 Total Failing NN Test: 10835

54 Processing Time: 0.001

55 -Ratio test on train->query matches...

56 Entered RobustMatcher::ratioTest(matches[2]) for 2 NearestNeighbors (NN)...

57 RobustMatcher::Ratio: 0.65

58 Total Input Matches Tested: 11989

59 Total Passing Ratio Tests: 1059

60 Total Matches Removed: 10930

61 Total Failing NN Test: 10930

62 Processing Time: 0

63 Entered RobustMatcher::symmetryTest(matches1,matches2,symMatches)...

64 -Running Symmetric Match tests...

65 Total Input Matches1x2 Tested: 988 x 1059

66 Total Passing Symmetric Test: 669

67 Processing Time: 0.012

68 Entered RobustMatcher::computeHomography(keypoints1/2, matches...)...

69 -Running RANSAC Constraints/Homography Matrix...

70 RobustMatcher::HmgTolerance: 1

71 Number Initial Matches: 669

72 Total 1st Inliers Remaining: 273

73 Total 2nd Inliers Remaining: 266

74 Processing Time: 0.05

75 Entered EpiPolar RobustMatcher::ransacTest(matches, keypoints1/2...)...

76 -Running EpiPolar Constraints/Fundamental Matrix...

77 RobustMatcher::EpiTolerance: 1

78 RobustMatcher::EpiConfidence: 0.99

79 Number Initial Matches: 266

80 Inliers on 1st Epipolar: 219

81 Inliers on 2nd Epipolar: 209

82 Total Passing Epipolar: 209

83 Processing Time: 0.011

84 Entered RobustMatcher::computeHomography(keypoints1/2, matches...)...

85 -Running RANSAC Constraints/Homography Matrix...

86 RobustMatcher::HmgTolerance: 1

87 Number Initial Matches: 209

88 Total 1st Inliers Remaining: 197

89 Total 2nd Inliers Remaining: 197

90 Processing Time: 0.001

91 %% match-pair complete in 1.859 seconds!

92

93

In the above example, lines 2-13 provide general information about the program and compute environment. If MAXTHREADS were set to a value less than 8, the number of total threads (line 11) would reflect this number. Line 15 specifies the precise time the matcher algorithm was invoked. Lines 18-25 show the algorithm string specification, the names of the query (MATCH) and train (FROM) images, and the full and rendered sizes of the images. Lines 27 and 28 show the total number of keypoints or features that were detected by the SURF detector for both the query (11823) and train (11989) images. Lines 31-33 indicate that the descriptors of all the feature keypoints are being extracted. Extraction of keypoint descriptors can be costly under some conditions. Users can restrict the number of features detected by using the MAXPOINTS parameter to specify the maximum number of points to save. The values in brackets in lines 27 and 28 show the total number of features detected when MAXPOINTS is used.

Outlier detection begins at line 35. The ratio test is performed first. Here the matcher algorithm is invoked for each match pair, regardless of the number of train (FROMLIST) images provided. For each keypoint in the query image, the two nearest matches in the train image are computed and the results are reported in lines 39-42. Then the matches in the opposite direction are computed in lines 43-46. A bi-directional ratio test is computed for the query->train matches in lines 47-54 and then for the train->query matches in lines 55-62. A significant number of matches are removed in this step. Users can adjust this behavior, retaining more points, by setting the RATIO parameter closer to 1.0. The symmetry test, which ensures that matches from query->train have the same match as train->query, is reported in lines 63-67. In lines 68-74, the homography matrix is computed and outliers are removed where the tolerance exceeds HMGTOLERANCE.
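The symmetry test described above can be sketched in a few lines of Python. This is only an illustration of the idea, not the RobustMatcher implementation; the function name and the index pairs are hypothetical.

```python
def symmetry_test(query_to_train, train_to_query):
    """Keep (query_idx, train_idx) pairs that agree in both directions."""
    # Pairs in the reverse direction are stored as (train_idx, query_idx).
    reverse = set(train_to_query)
    return [(q, t) for (q, t) in query_to_train if (t, q) in reverse]


# Hypothetical keypoint indices that survived the two ratio tests.
q2t = [(0, 5), (1, 7), (2, 9)]
t2q = [(5, 0), (7, 3), (9, 2)]
print(symmetry_test(q2t, t2q))  # [(0, 5), (2, 9)]
```

The pair (1, 7) is dropped because train keypoint 7 prefers query keypoint 3, so the two directions disagree.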
Lines 75-83 show the results of the epipolar fundamental matrix computation and outlier detection. Matching is completed in lines 84-90, which report the final spatial homography computations that produce the final transformation matrix between the query and train images. Line 89 shows the final number of control measures computed between the image pairs. Lines 35-90 are repeated for each query/train image pair (with perhaps slight formatting differences). Line 91 shows the total processing time for the matching process.

Evaluation of Matcher Algorithm Performance

findfeatures provides users with many features and options to create unique algorithms that are suitable for many of the diverse image matching conditions that naturally occur during a spacecraft mission. Some are better suited to certain conditions than others. But how does one determine which algorithm combination performs best for an image pair? By computing standard performance metrics, one can determine which algorithm performs best. Using the ALGOSPECFILE parameter, users can specify one or more algorithms to apply to a given image matching process. Each algorithm specified, one per line in the input file, results in the creation of a unique robust matcher algorithm that is applied to the input files in succession. The performance of each matcher is computed from a standard set of metrics described in a thesis titled Efficient matching of robust features for embedded SLAM. From the metrics described in this thesis, a single metric is computed that measures the abilities of the whole matching process and is relevant to all three FBM steps: detection, description and matching. This metric is called Efficiency. The Efficiency metric is computed from two other metrics called Repeatability and Recall.

Repeatability represents the ability to detect the same point in the scene under viewpoint and lighting changes and subject to noise. The value of Repeatability is calculated as:

Recall represents the ability to find the correct matches based on the description of detected features. The value of Recall is calculated as:

Efficiency combines the Repeatability and Recall. It is defined as:

findfeatures computes the Efficiency for each algorithm and selects the matcher algorithm combination with the highest value. This value is reported at the end of the application run in the MatchSolution group. Here is an example:

Group = MatchSolution

Efficiency = 0.016662437621585

MinEfficiency = 0.016662437621585

MaxEfficiency = 0.016662437621585

End_Group
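The Efficiency value reported above equals 197/11823, the ratio of final matches to query keypoints in the debug log shown earlier. That suggests, but does not confirm, that Efficiency can be read as the fraction of query keypoints that end up as accepted matches. The short Python check below reproduces the value under that assumption.

```python
# Counts taken from the debug log shown earlier in this document.
query_keypoints = 11823  # "Total Query keypoints" (log line 27)
final_matches = 197      # "Total 2nd Inliers Remaining" (log line 89)

# Assumption: Efficiency = final matches / query keypoints.
efficiency = final_matches / query_keypoints
print(efficiency)  # approximately 0.01666
```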


Parameter Groups

- Files
- Algorithms
- Constraints
- Image Transformation Options
- Control

Files: FROM

Description

This cube/image (train) will be translated to register to the MATCH (query) cube/image. This application also supports other common image formats such as PNG, TIFF or JPEG. Essentially any image that can be read by OpenCV's imread() routine is supported.

| Type | cube |
|---|---|
| File Mode | input |
| Default | None |
| Filter | *.cub |

Files: FROMLIST

Description

Use this parameter to select a filename which contains a list of cube filenames. The cubes identified inside this file will be used to create the control network. The following is an example of the contents of a typical FROMLIST file:

AS15-M-0582_16b.cub

AS15-M-0583_16b.cub

AS15-M-0584_16b.cub

AS15-M-0585_16b.cub

AS15-M-0586_16b.cub

AS15-M-0587_16b.cub

Each file name in a FROMLIST file should be on a separate line.

| Type | filename |
|---|---|
| File Mode | input |
| Internal Default | None |
| Filter | *.lis |

Files: MATCH

Description

Name of the image to match to. This will be the reference image in the output control network. It is also referred to as the query image in OpenCV documentation.

| Type | cube |
|---|---|
| File Mode | input |
| Default | None |
| Filter | *.cub |

Files: ONET

Description

This file will contain the Control Point network results of findfeatures in a binary format. There will be no false or failed matches in the output control network file.

| Type | filename |
|---|---|
| File Mode | output |
| Internal Default | None |
| Filter | *.net *.txt |

Files: TOLIST

Description

This file will contain the list of (cube) files in the control network. For multi-image matching, some files may not have matches detected. These files will not be written to TOLIST. The MATCH file is always added first and all other images that have matches are added to TOLIST.

| Type | filename |
|---|---|
| File Mode | output |
| Internal Default | None |
| Filter | *.lis |

Files: TONOTMATCHED

Description

This file will contain the list of (cube) files that were not successfully matched. The listed files can then be run individually with more specifically tailored matcher algorithm specifications.

NOTE: this file is appended to, so repeated runs accumulate failures, making it easier to handle failed runs.

| Type | filename |
|---|---|
| File Mode | output |
| Internal Default | None |
| Filter | *.lis |

Algorithms: ALGORITHM

Description

This parameter provides user control over selecting a wide variety of feature detector, extractor and matcher combinations. This parameter also provides a mechanism to set any of the valid parameters of the algorithms.

| Type | string |
|---|---|
| Internal Default | None |

Algorithms: ALGOSPECFILE

Description

To accommodate a potentially large set of feature algorithms, you can provide them in a file. The format is the same as the ALGORITHM format, but each unique algorithm must be specified on a separate line. Theoretically, the number you specify is unlimited. This option is particularly useful for generating a series of algorithms that vary parameters for any of the elements of the feature algorithm.

| Type | filename |
|---|---|
| Internal Default | None |
| Filter | *.lis |
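As an illustration of generating a series of algorithms that vary one parameter, the Python sketch below builds one specification per line for several SURF hessianThreshold values. The helper name `build_spec_lines` and the chosen threshold values are hypothetical; only the `surf@hessianThreshold:N/surf` form comes from the examples in this document.

```python
def build_spec_lines(thresholds):
    """Return one SURF specification string per hessianThreshold value."""
    return ["surf@hessianThreshold:{}/surf".format(t) for t in thresholds]


# Hypothetical threshold values chosen only for illustration.
lines = build_spec_lines([50, 100, 200])
print("\n".join(lines))
```

Saving the printed lines to a file (one specification per line) gives a file in the layout that ALGOSPECFILE expects.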

Algorithms: LISTALL

Description

This parameter will retrieve all the registered OpenCV algorithms available that can be created by name. The ones pertinent to this application are those prepended with "Feature2D" and "DescriptorMatcher". However, this option lists all of the registered OpenCV algorithms.

| Type | boolean |
|---|---|
| Default | No |

Algorithms: LISTMATCHERS

Description

This parameter will retrieve all registered OpenCV feature matcher related algorithms available that can be created by name. These algorithms will have "Feature2D" and "DescriptorMatcher" prepended to the name of the algorithm. Many of the Detector and Extractor algorithms are not registered, so they will not appear in this list. This option will create a PVL structure of individual algorithms and all their parameters.

| Type | boolean |
|---|---|
| Default | No |

Algorithms: LISTSPEC

Description

This parameter will retrieve all the registered OpenCV algorithms available that can be created by name. The ones pertinent to this application are those prepended with "Feature2D" and "DescriptorMatcher". However, this option lists all of the registered OpenCV algorithms.

| Type | boolean |
|---|---|
| Default | No |

Algorithms: TOINFO

Description

When an information option is requested (LISTALL or LISTSPEC), the user can provide the name of an output file here where the information, in the form of a PVL structure, will be written. If any of those options are selected and a file is not provided in this parameter, the output is written to the screen or GUI.

One convenient option is to specify the terminal device as the output file. This lists the results to the screen so that your input can be quickly checked for accuracy. Here is an example using the algorithm listing option and the result:

findfeatures listspec=true \
    algorithm=detector.SimpleBlob@minrepeatability:1/orb \
    toinfo=/dev/tty

Object = FeatureAlgorithms

Object = FeatureAlgorithm

Name = detector.SimpleBlob@minrepeatability:1/orb/DescriptorMatc-

her.BFMatcher@normType:6@crossCheck:false

OpenCVVersion = 2.4.6

Specification = detector.SimpleBlob@minrepeatability:1/orb/DescriptorMatc-

her.BFMatcher@normType:6@crossCheck:false

Object = Algorithm

Type = Detector

Name = Feature2D.SimpleBlob

blobColor = 0

filterByArea = Yes

filterByCircularity = No

filterByColor = Yes

filterByConvexity = Yes

filterByInertia = Yes

maxArea = 5000.0

maxCircularity = 3.40282346638529e+38

maxConvexity = 3.40282346638529e+38

maxInertiaRatio = 3.40282346638529e+38

maxThreshold = 220.0

minDistBetweenBlobs = 10.0

minRepeatability = 1

minThreshold = 50.0

thresholdStep = 10.0

End_Object

Object = Algorithm

Type = Extractor

Name = Feature2D.ORB

WTA_K = 2

edgeThreshold = 31

firstLevel = 0

nFeatures = 500

nLevels = 8

patchSize = 31

scaleFactor = 1.2000000476837

scoreType = 0

End_Object

Object = Algorithm

Type = Matcher

Name = DescriptorMatcher.BFMatcher

crossCheck = No

normType = 6

End_Object

End_Object

End_Object

End

| Type | filename |
|---|---|
| Default | /dev/tty |

Algorithms: DEBUG

Description

At times, things go wrong. By setting DEBUG=TRUE, information is printed as elements of the matching algorithm are executed. This option is very helpful to monitor the entire matching and outlier detection processing to determine where adjustments in the parameters can be made to produce better results.

| Type | boolean |
|---|---|
| Default | false |

Algorithms: DEBUGLOG

Description

Provide a file that will have all the debugging content appended as it is generated in the processing steps. This file can be very useful to determine, for example, where in the matching and/or outlier detection most of the matches are being rejected. The output can be lengthy and detailed, but it is critical for determining where adjustments to the parameters can be made to provide better results.

| Type | filename |
|---|---|
| File Mode | output |
| Internal Default | None |
| Filter | *.log |
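One way to see where matches are being rejected is to pull the per-stage counts out of a debug log. The Python sketch below does this for an abridged sample that copies a few lines from the debug session shown earlier in this document. The label list and the function name `stage_counts` are choices made for this illustration, and the sketch assumes the log keeps the `Label: count` layout seen in that session.

```python
import re

# Abridged sample copied from the debug session shown earlier in this document.
SAMPLE_LOG = """\
Total Query keypoints: 11823 [11823]
Total Passing Ratio Tests: 988
Total Passing Symmetric Test: 669
Total 2nd Inliers Remaining: 266
Total Passing Epipolar: 209
Total 2nd Inliers Remaining: 197
"""

# Labels whose values report how many points or matches survive a stage.
STAGE_LABELS = (
    "Total Query keypoints",
    "Total Passing Ratio Tests",
    "Total Passing Symmetric Test",
    "Total 2nd Inliers Remaining",
    "Total Passing Epipolar",
)


def stage_counts(log_text):
    """Return (label, count) pairs in the order they appear in the log."""
    # An optional leading line number is skipped before the label.
    pattern = re.compile(r"^\s*(?:\d+\s+)?(.+?):\s+(\d+)\b")
    counts = []
    for line in log_text.splitlines():
        found = pattern.match(line)
        if found and found.group(1) in STAGE_LABELS:
            counts.append((found.group(1), int(found.group(2))))
    return counts


for label, count in stage_counts(SAMPLE_LOG):
    print("{:32s}{:6d}".format(label, count))
```

A large drop between two consecutive stages points at the parameter to revisit, for example RATIO when most matches are lost in the ratio test.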

Algorithms: PARAMETERS

Description

This file can contain specialized parameters that will modify certain behaviors in the robust matcher algorithm. They can vary over time and are documented in the application descriptions.

| Type | filename |
|---|---|
| Internal Default | None |
| Filter | *.conf |

Algorithms: MAXPOINTS

Description

Specifies the maximum number of keypoints to save in the detection phase. If a value is not provided for this parameter, there will be no restriction set on the number of keypoints that will be used to match. If specified, then approximately MAXPOINTS keypoints with the highest/best detector response values are retained and passed on to the extractor and matcher algorithms. This parameter is useful for detectors that produce a high number of features. A large number of features will cause the matching phase and outlier detection to become costly and inefficient.

| Type | integer |
|---|---|
| Internal Default | 0 |
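The selection described for MAXPOINTS can be pictured as ranking keypoints by detector response and keeping the strongest ones. The Python sketch below is an assumption-laden illustration: it keeps exactly `max_points` keypoints (the description above says "approximately"), it treats 0 as "no limit" based on the internal default, and the keypoint dictionaries are hypothetical stand-ins for detector output.

```python
def keep_strongest(keypoints, max_points):
    """Keep the max_points keypoints with the highest response; 0 means no limit."""
    if max_points <= 0:
        # Assumption: the internal default of 0 leaves the keypoints unrestricted.
        return list(keypoints)
    ranked = sorted(keypoints, key=lambda kp: kp["response"], reverse=True)
    return ranked[:max_points]


# Hypothetical keypoints, each with a detector response value.
points = [
    {"id": "a", "response": 0.2},
    {"id": "b", "response": 0.9},
    {"id": "c", "response": 0.5},
]
print([kp["id"] for kp in keep_strongest(points, 2)])  # ['b', 'c']
```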

Constraints: RATIO

Description

For each feature point, we have two candidate matches in the other view. These are the two best ones based on the distance between their descriptors. If this measured distance is very low for the best match, and much larger for the second best match, we can safely accept the first match as a good one since it is unambiguously the best choice. Reciprocally, if the two best matches are relatively close in distance, then there exists a possibility that we make an error if we select one or the other. In this case, we should reject both matches. Here, we perform this test by verifying that the ratio of the distance of the best match over the distance of the second best match is not greater than a given RATIO threshold. Most of the matches will be removed by this test. The farther from 1.0, the more matches will be rejected.

| Type | double |
|---|---|
| Internal Default | 0.65 |
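The ratio test described above can be sketched in Python as follows. This is a minimal illustration rather than the RobustMatcher code; the function name and the distance values are hypothetical, and rejecting a zero second-best distance is a choice made for this sketch.

```python
def ratio_test(candidates, ratio=0.65):
    """Keep matches whose best/second-best distance ratio does not exceed ratio."""
    kept = []
    for best, second in candidates:
        # A zero second-best distance cannot yield a usable ratio, so reject it.
        if second > 0 and best / second <= ratio:
            kept.append((best, second))
    return kept


# Hypothetical descriptor distances: (best match, second-best match).
pairs = [(0.10, 0.50), (0.40, 0.45), (0.30, 0.50)]
print(ratio_test(pairs))        # [(0.1, 0.5), (0.3, 0.5)]
print(ratio_test(pairs, 0.95))  # all three pairs pass
```

The second pair is ambiguous (its two candidates are nearly equally close), so it is rejected at the default threshold and only survives when RATIO is moved closer to 1.0.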

Constraints: EPITOLERANCE

Description

The tolerance specifies the maximum distance in pixels that a feature may deviate from the epipolar line for each matching feature.

| Type | double |
|---|---|
| Internal Default | 3.0 |
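To show what "distance from the epipolar line" means, the Python sketch below computes the epipolar line of a query point from a fundamental matrix and measures how far the matched train point lies from it. The function name, the matrix and the points are illustrative assumptions, as is the convention that the line in the train image is obtained by multiplying the fundamental matrix with the query point.

```python
import math


def epipolar_distance(F, query_pt, train_pt):
    """Distance in pixels from train_pt to the epipolar line of query_pt."""
    x, y = query_pt
    # Epipolar line l = F * (x, y, 1), written as a*u + b*v + c = 0.
    a, b, c = (row[0] * x + row[1] * y + row[2] for row in F)
    u, v = train_pt
    return abs(a * u + b * v + c) / math.hypot(a, b)


# Illustrative fundamental matrix for a purely horizontal camera shift,
# where corresponding points lie on the same image row.
F = [[0, 0, 0],
     [0, 0, -1],
     [0, 1, 0]]

distance = epipolar_distance(F, (10.0, 20.0), (50.0, 22.0))
print(distance, distance <= 3.0)  # 2.0 True
```

Here the train point sits 2 pixels off the expected row, so it would pass a tolerance of 3.0 but fail the tolerance of 1.0 used in Example 1.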

Constraints: EPICONFIDENCE

Description

This parameter indicates the confidence level of the epipolar determination ratio. A value of 1.0 requires that all pixels be valid in the epipolar computation.

| Type | double |
|---|---|
| Internal Default | 0.99 |

Constraints: HMGTOLERANCE

Description

If we consider the special case where two views of a scene are separated by a pure rotation, then it can be observed that the fourth column of the extrinsic matrix will be made of all 0s (that is, translation is null). As a result, the projective relation in this special case becomes a 3x3 matrix. This matrix is called a homography and it implies that, under special circumstances (here, a pure rotation), the image of a point in one view is related to the image of the same point in another by a linear relation.

The parameter is used as a tolerance in the computation of the distance between keypoints using the homography matrix relationship between the MATCH image and each FROM/FROMLIST image. Points are discarded when dist > TOLERANCE * min_dist, where min_dist is the smallest distance between points.

| Type | double |
|---|---|
| Internal Default | 3.0 |
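The linear relation mentioned above can be illustrated by mapping a point through a 3x3 homography in homogeneous coordinates and measuring how far the result lands from its matched point. The Python sketch below is an illustration only: the function names, the matrix and the points are hypothetical, and it reports the plain pixel distance rather than applying the TOLERANCE * min_dist rule described above.

```python
import math


def apply_homography(H, point):
    """Map (x, y) through the 3x3 homography H using homogeneous coordinates."""
    x, y = point
    u, v, w = (row[0] * x + row[1] * y + row[2] for row in H)
    return (u / w, v / w)


def transfer_error(H, query_pt, train_pt):
    """Pixel distance between the mapped query point and its train match."""
    u, v = apply_homography(H, query_pt)
    return math.hypot(u - train_pt[0], v - train_pt[1])


# Hypothetical homography: a shift of 5 pixels in x and -2 pixels in y.
H = [[1, 0, 5],
     [0, 1, -2],
     [0, 0, 1]]

print(transfer_error(H, (10.0, 10.0), (15.0, 8.0)))   # 0.0
print(transfer_error(H, (10.0, 10.0), (18.0, 12.0)))  # 5.0
```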

Constraints: MAXTHREADS

Description

This parameter allows users to control the number of threads used for image matching. By default, all available threads on the system are used. If MAXTHREADS is specified, no more than MAXTHREADS threads will be used; if the value exceeds the number of CPUs physically available on the system, the number of available CPUs is used instead.

| Type | integer |
|---|---|
| Internal Default | 0 |
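The thread limit can be summarized as taking the smaller of MAXTHREADS and the number of available CPUs. The Python sketch below expresses that reading; the function name is hypothetical, and treating the internal default of 0 as "use all CPUs" is an assumption drawn from the description above.

```python
import os


def effective_threads(max_threads, available_cpus=None):
    """Return the thread count implied by MAXTHREADS under the assumed rule."""
    if available_cpus is None:
        available_cpus = os.cpu_count() or 1
    if max_threads <= 0:
        # Assumption: the internal default of 0 means "use every available CPU".
        return available_cpus
    return min(max_threads, available_cpus)


print(effective_threads(4, available_cpus=8))   # 4
print(effective_threads(16, available_cpus=8))  # 8
print(effective_threads(0, available_cpus=8))   # 8
```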

Image Transformation Options: FASTGEOM

Description

When TRUE, this option will perform a fast geometric linear transformation that projects each FROM/FROMLIST image to the camera space of the MATCH image. Note that this option is theoretically not needed for scale/rotation invariant feature matchers such as SIFT and SURF, but there are limitations to the invariance of these matchers. For matchers that are not scale and rotation invariant, this option (or something like it) will be required to orient each image to similar spatial consistency. Users should determine the capabilities of the matchers used.

| Type | boolean |
|---|---|
| Default | false |

Image Transformation Options: FASTGEOMPOINTS

Description

| Type | integer |
|---|---|
| Internal Default | 25 |

Image Transformation Options: GEOMTYPE

Description

Provide options as to how FASTGEOM projects data in the FROM (train) image to the MATCH (query) image space.

| Type | string |
|---|---|
| Default | CAMERA |
| Option List: | |

Image Transformation Options: FILTER

Description

Apply an image filter to both images before matching. These filters are typically used when low emission or incidence angles are present. They are intended to remove albedo and highlight edges and are well-suited for these types of feature detectors.

| Type | string |
|---|---|
| Default | None |
| Option List: | |

Control: NETWORKID

Description

The ID or name of this particular control network. This string will be added to the output control network file, and can be used to identify the network.

| Type | string |
|---|---|
| Default | Features |

Control: POINTID

Description

This string will be used to create unique IDs for each control point created by this program. The string must contain a single series of question marks ("?"). For example: "VallesMarineris????"

The question marks will be replaced with a number beginning with zero and incremented by one each time a new control point is created. The example above would cause the first control point to have an ID of "VallesMarineris0000", the second ID would be "VallesMarineris0001" and so on. The maximum number of new control points for this example would be 10000 with the final ID being "VallesMarineris9999".

Note: Make sure there are enough "?"s for all the control points that might be created during this run. If all the possible point IDs are exhausted the program will exit with an error, and will not produce an output control network file. The number of control points created depends on the size and quantity of image overlaps and the density of control points as defined by the DEFFILE parameter.

Examples of POINTID:

- POINTID="JohnDoe?????"

- POINTID="Quad1_????"

- POINTID="JD_???_test1"

| Type | string |
|---|---|
| Default | FeatureId_????? |
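The substitution of question marks described above can be sketched in Python. This is an illustration of the rule as documented here, not the code findfeatures uses; the function name `make_point_id` is hypothetical.

```python
import re


def make_point_id(pattern, index):
    """Replace the single run of '?' in pattern with a zero-padded index."""
    runs = re.findall(r"\?+", pattern)
    if len(runs) != 1:
        raise ValueError("pattern must contain exactly one series of '?'")
    width = len(runs[0])
    if index < 0 or index >= 10 ** width:
        # All IDs that fit in the available digits have been used up.
        raise ValueError("index does not fit in {} digits".format(width))
    return pattern.replace(runs[0], str(index).zfill(width), 1)


print(make_point_id("VallesMarineris????", 0))     # VallesMarineris0000
print(make_point_id("VallesMarineris????", 9999))  # VallesMarineris9999
print(make_point_id("JD_???_test1", 42))           # JD_042_test1
```

With four question marks, index 10000 no longer fits, which corresponds to the exhausted-ID error mentioned in the note above.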

Control: POINTINDEX

Description

This parameter can be used to specify the starting POINTID index number to assist in the creation of unique control point identifiers. Users must determine the highest used index and use the next number in the sequence to provide unique point ids.

| Type | integer |
|---|---|
| Default | 1 |

Control: DESCRIPTION

Description

A text description of the contents of the output control network. The text can contain anything the user wants. For example it could be used to describe the area of interest the control network is being made for.

| Type | string |
|---|---|
| Default | Find features in image pairs or list |

Control: NETTYPE

Description

There are two types of control network files that can be created in this application: IMAGE and GROUND. For IMAGE types, the pair is assumed to be overlapping pairs where control measures for both images are created. For NETTYPE=GROUND, only the FROM file measure is recorded for purposes of dead reckoning of the image using jigsaw.

| Type | string |
|---|---|
| Default | IMAGE |
| Option List: | |

Control: GEOMSOURCE

Description

For input files that provide geometry, specify which one provides the latitude/longitude values for each control point. NONE is an acceptable option when no geometry is available. Otherwise, the user must choose FROM or MATCH as the cube file that will provide geometry.

| Type | string |
|---|---|
| Default | MATCH |
| Option List: | |

Control: TARGET

Description

This parameter is optional and not necessary if using Level 1 ISIS cube files. It specifies the name of the target body that the input images were acquired of. If the input images are ISIS images, this value is retrieved from the camera model or map projection.

| Type | string |
|---|---|
| Internal Default | None |

Example 1

Run matcher on pair of MESSENGER images

Description

This example shows the results of matching two overlapping MESSENGER images of different scales. The following command was used to produce the output network:

findfeatures algorithm="surf@hessianThreshold:100/surf" \
    match=EW0211981114G.lev1.cub \
    from=EW0242463603G.lev1.cub \
    epitolerance=1.0 ratio=0.650 hmgtolerance=1.0 \
    networkid="EW0211981114G_EW0242463603G" \
    pointid="EW0211981114G_?????" \
    onet=EW0211981114G.net \
    description="Test MESSENGER pair" debug=true \
    debuglog=EW0211981114G.log

Note that the fast geom option is not used for this example because the SURF algorithm is scale and rotation invariant. Here is the algorithm information for the specification of the matcher parameters:

Object = FeatureAlgorithms

Object = FeatureAlgorithm

Name = surf@hessianThreshold:100/surf/DescriptorMatcher.BFMatche-

r@normType:4@crossCheck:false

OpenCVVersion = 2.4.6.1

Specification = surf@hessianThreshold:100/surf/DescriptorMatcher.BFMatche-

r@normType:4@crossCheck:false

Object = Algorithm

Type = Detector

Name = Feature2D.SURF

extended = No

hessianThreshold = 100.0

nOctaveLayers = 3

nOctaves = 4

upright = No

End_Object

Object = Algorithm

Type = Extractor

Name = Feature2D.SURF

extended = No

hessianThreshold = 100.0

nOctaveLayers = 3

nOctaves = 4

upright = No

End_Object

Object = Algorithm

Type = Matcher

Name = DescriptorMatcher.BFMatcher

crossCheck = No

normType = 4

End_Object

End_Object

End_Object

End
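The relationship between the short `algorithm` string on the command line and the expanded `Specification` above can be illustrated with a small sketch. It assumes, based only on this example, that components are separated by `/` in detector/extractor/matcher order and that each component is a name followed by optional `@key:value` pairs. The function names are hypothetical and this is not the parser findfeatures itself uses.

```python
def parse_component(text):
    """Split 'name@key:value@key:value' into (name, {key: value})."""
    name, *params = text.split("@")
    options = {}
    for param in params:
        # Each parameter is assumed to look like 'key:value'.
        key, _, value = param.partition(":")
        options[key] = value
    return name, options


def parse_specification(spec):
    """Map a 'detector/extractor/matcher' string onto its roles.

    Roles missing from the string (for example, no matcher) are
    simply absent from the result.
    """
    roles = ("detector", "extractor", "matcher")
    parts = spec.split("/")
    return {role: parse_component(part) for role, part in zip(roles, parts)}


spec = ("surf@hessianThreshold:100/surf/"
        "DescriptorMatcher.BFMatcher@normType:4@crossCheck:false")
parsed = parse_specification(spec)
for role, (name, options) in parsed.items():
    print(role, name, options)
```

Values are kept as strings; converting them to numbers or booleans is left out because the type rules are not stated here.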

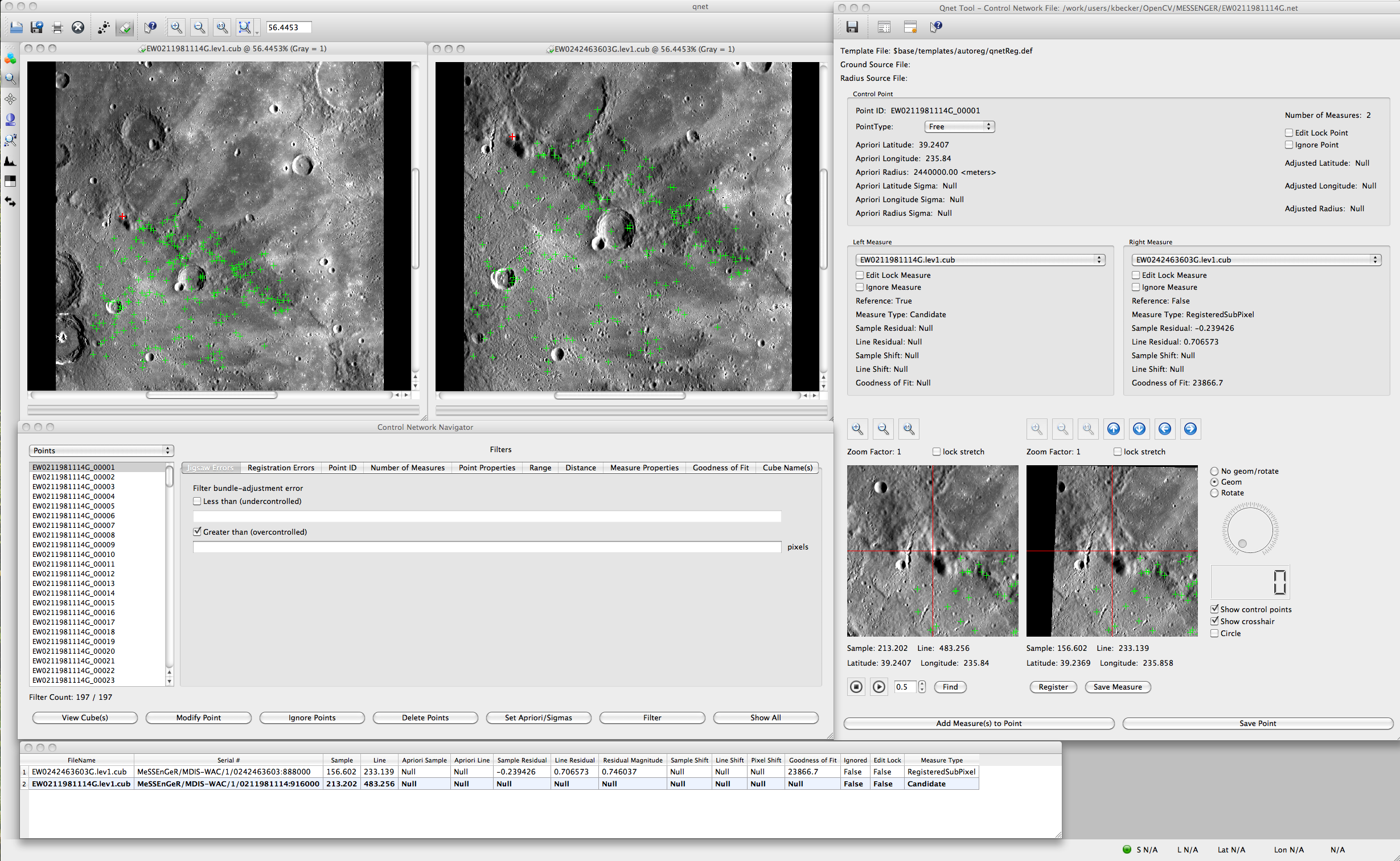

The output debug log file and a line-by-line description of the result is shown in the main application documention. And here is the screen shot of qnet for the resulting network:

|

Example 2: Show all the available algorithms and their default parameters

Description

This provides a reference for all the current algorithms and their default parameters. This list may not include all the available OpenCV algorithms, nor may all algorithms be applicable. Users should rerun this command to get the current options available on their system, as they may differ.

findfeatures listall=true

Object = Algorithms

OpenCVVersion = 2.4.6.1

Object = Algorithm

Name = BackgroundSubtractor.GMG

backgroundPrior = 0.8

decisionThreshold = 0.8

initializationFrames = 120

learningRate = 0.025

maxFeatures = 64

quantizationLevels = 16

smoothingRadius = 7

updateBackgroundModel = Yes

End_Object

Object = Algorithm

Name = BackgroundSubtractor.MOG

backgroundRatio = 0.7

history = 200

nmixtures = 5

noiseSigma = 15.0

End_Object

Object = Algorithm

Name = BackgroundSubtractor.MOG2

backgroundRatio = 0.89999997615814

detectShadows = Yes

fCT = 0.050000000745058

fTau = 0.5

fVarInit = 15.0

fVarMax = 75.0

fVarMin = 4.0

history = 500

nShadowDetection = 127

nmixtures = 5

varThreshold = 16.0

varThresholdGen = 9.0

End_Object

Object = Algorithm

Name = CLAHE

clipLimit = 40.0

tilesX = 8

tilesY = 8

End_Object

Object = Algorithm

Name = DenseOpticalFlow.DualTVL1

epsilon = 0.01

iterations = 300

lambda = 0.15

nscales = 5

tau = 0.25

theta = 0.3

useInitialFlow = No

warps = 5

End_Object

Object = Algorithm

Name = DenseOpticalFlowExt.Brox_GPU

alpha = 0.19699999690056

gamma = 50.0

innerIterations = 10

outerIterations = 77

scaleFactor = 0.80000001192093

solverIterations = 10

End_Object

Object = Algorithm

Name = DenseOpticalFlowExt.DualTVL1

epsilon = 0.01

iterations = 300

lambda = 0.15

nscales = 5

tau = 0.25

theta = 0.3

useInitialFlow = No

warps = 5

End_Object

Object = Algorithm

Name = DenseOpticalFlowExt.DualTVL1_GPU

Error = "/usgs/pkgs/local/v002/src/opencv/opencv-2.4.6.1/release/modules/-

gpu/precomp.hpp:137: error: (-216) The library is compiled without

GPU support in function throw_nogpu"

End_Object

Object = Algorithm

Name = DenseOpticalFlowExt.Farneback

flags = 0

numIters = 10

numLevels = 5

polyN = 5

polySigma = 1.1

pyrScale = 0.5

winSize = 13

End_Object

Object = Algorithm

Name = DenseOpticalFlowExt.Farneback_GPU

Error = "/usgs/pkgs/local/v002/src/opencv/opencv-2.4.6.1/modules/core/src-

/gpumat.cpp:109: error: (-216) The library is compiled without

CUDA support in function getDevice"

End_Object

Object = Algorithm

Name = DenseOpticalFlowExt.PyrLK_GPU

Error = "/usgs/pkgs/local/v002/src/opencv/opencv-2.4.6.1/release/modules/-

gpu/precomp.hpp:137: error: (-216) The library is compiled without

GPU support in function throw_nogpu"

End_Object

Object = Algorithm

Name = DenseOpticalFlowExt.Simple

averagingBlockSize = 2

layers = 3

maxFlow = 4

occThr = 0.35

postProcessWindow = 18

sigmaColor = 25.5

sigmaColorFix = 25.5

sigmaDist = 4.1

sigmaDistFix = 55.0

speedUpThr = 10.0

upscaleAveragingRadius = 18

upscaleSigmaColor = 25.5

upscaleSigmaDist = 55.0

End_Object

Object = Algorithm

Name = DescriptorMatcher.BFMatcher

crossCheck = No

normType = 4

End_Object

Object = Algorithm

Name = DescriptorMatcher.FlannBasedMatcher

End_Object

Object = Algorithm

Name = FaceRecognizer.Eigenfaces

eigenvalues = cv::Mat

eigenvectors = cv::Mat

labels = cv::Mat

mean = cv::Mat

ncomponents = 0

projections = cv::Mat_Vector

threshold = 1.79769313486232e+308

End_Object

Object = Algorithm

Name = FaceRecognizer.Fisherfaces

eigenvalues = cv::Mat

eigenvectors = cv::Mat

labels = cv::Mat

mean = cv::Mat

ncomponents = 0

projections = cv::Mat_Vector

threshold = 1.79769313486232e+308

End_Object

Object = Algorithm

Name = FaceRecognizer.LBPH

grid_x = 8

grid_y = 8

histograms = cv::Mat_Vector

labels = cv::Mat

neighbors = 8

radius = 1

threshold = 1.79769313486232e+308

End_Object

Object = Algorithm

Name = Feature2D.BRIEF

bytes = 32

End_Object

Object = Algorithm

Name = Feature2D.BRISK

octaves = 3

thres = 30

End_Object

Object = Algorithm

Name = Feature2D.Dense

featureScaleLevels = 1

featureScaleMul = 0.10000000149012

initFeatureScale = 1.0

initImgBound = 0

initXyStep = 6

varyImgBoundWithScale = No

varyXyStepWithScale = Yes

End_Object

Object = Algorithm

Name = Feature2D.FAST

nonmaxSuppression = Yes

threshold = 10

End_Object

Object = Algorithm

Name = Feature2D.FASTX

nonmaxSuppression = Yes

threshold = 10

type = 2

End_Object

Object = Algorithm

Name = Feature2D.FREAK

nbOctave = 4

orientationNormalized = Yes

patternScale = 22.0

scaleNormalized = Yes

End_Object

Object = Algorithm

Name = Feature2D.GFTT

k = 0.04

minDistance = 1.0

nfeatures = 1000

qualityLevel = 0.01

useHarrisDetector = No

End_Object

Object = Algorithm

Name = Feature2D.Grid

detector = Null

gridCols = 4

gridRows = 4

maxTotalKeypoints = 1000

End_Object

Object = Algorithm

Name = Feature2D.HARRIS

k = 0.04

minDistance = 1.0

nfeatures = 1000

qualityLevel = 0.01

useHarrisDetector = Yes

End_Object

Object = Algorithm

Name = Feature2D.MSER

areaThreshold = 1.01

delta = 5

edgeBlurSize = 5

maxArea = 14400

maxEvolution = 200

maxVariation = 0.25

minArea = 60

minDiversity = 0.2

minMargin = 0.003

End_Object

Object = Algorithm

Name = Feature2D.ORB

WTA_K = 2

edgeThreshold = 31

firstLevel = 0

nFeatures = 500

nLevels = 8

patchSize = 31

scaleFactor = 1.2000000476837

scoreType = 0

End_Object

Object = Algorithm

Name = Feature2D.SIFT

contrastThreshold = 0.04

edgeThreshold = 10.0

nFeatures = 0

nOctaveLayers = 3

sigma = 1.6

End_Object

Object = Algorithm

Name = Feature2D.STAR

lineThresholdBinarized = 8

lineThresholdProjected = 10

maxSize = 45

responseThreshold = 30

suppressNonmaxSize = 5

End_Object

Object = Algorithm

Name = Feature2D.SURF

extended = No

hessianThreshold = 100.0

nOctaveLayers = 3

nOctaves = 4

upright = No

End_Object

Object = Algorithm

Name = Feature2D.SimpleBlob

blobColor = 0

filterByArea = Yes

filterByCircularity = No

filterByColor = Yes

filterByConvexity = Yes

filterByInertia = Yes

maxArea = 5000.0

maxCircularity = 3.40282346638529e+38

maxConvexity = 3.40282346638529e+38

maxInertiaRatio = 3.40282346638529e+38

maxThreshold = 220.0

minDistBetweenBlobs = 10.0

minRepeatability = 2

minThreshold = 50.0

thresholdStep = 10.0

End_Object

Object = Algorithm

Name = GeneralizedHough.POSITION

dp = 1.0

levels = 360

minDist = 1.0

votesThreshold = 100

End_Object

Object = Algorithm

Name = GeneralizedHough.POSITION_ROTATION

angleStep = 1.0

dp = 1.0

levels = 360

maxAngle = 360.0

minAngle = 0.0

minDist = 1.0

votesThreshold = 100

End_Object

Object = Algorithm

Name = GeneralizedHough.POSITION_SCALE

dp = 1.0

levels = 360

maxScale = 2.0

minDist = 1.0

minScale = 0.5

scaleStep = 0.05

votesThreshold = 100

End_Object

Object = Algorithm

Name = GeneralizedHough.POSITION_SCALE_ROTATION

angleEpsilon = 1.0

angleStep = 1.0

angleThresh = 15000

dp = 1.0

levels = 360

maxAngle = 360.0

maxScale = 2.0

maxSize = 1000

minAngle = 0.0

minDist = 1.0

minScale = 0.5

posThresh = 100

scaleStep = 0.05

scaleThresh = 1000

xi = 90.0

End_Object

Object = Algorithm

Name = StatModel.EM

covMatType = 1

covs = cv::Mat_Vector

epsilon = 1.19209289550781e-07

maxIters = 100

means = cv::Mat

nclusters = 5

weights = cv::Mat

End_Object

Object = Algorithm

Name = SuperResolution.BTVL1

alpha = 0.7

blurKernelSize = 5

blurSigma = 0.0

btvKernelSize = 7

iterations = 180

lambda = 0.03

opticalFlow = DenseOpticalFlowExt.Farneback

scale = 4

tau = 1.3

temporalAreaRadius = 4

End_Object

End_Object

End
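Because the listing is long, it can help to load it into a dictionary and filter it, for example to see only the `Feature2D` entries. The following is a minimal sketch, assuming the listing has been saved as text in the flat `key = value` layout shown above. The function name is hypothetical, this is not a full PVL parser, and wrapped continuation lines (such as the long `Error` values) are skipped rather than rejoined.

```python
def parse_algorithm_listing(text):
    """Return {algorithm name: {parameter: value}} from a listall-style dump."""
    algorithms = {}
    current = None  # parameters of the Algorithm object being read, if any
    for raw in text.splitlines():
        line = raw.strip()
        if line == "End_Object":
            current = None
            continue
        key, sep, value = line.partition("=")
        if not sep:
            continue  # blank lines, 'End', and wrapped continuation lines
        key, value = key.strip(), value.strip()
        if key == "Object":
            # Only 'Object = Algorithm' starts a new entry; the outer
            # 'Object = Algorithms' container is ignored.
            current = {} if value == "Algorithm" else None
        elif current is not None:
            if key == "Name":
                algorithms[value] = current
            else:
                current[key] = value
    return algorithms


sample = """
Object = Algorithms
OpenCVVersion = 2.4.6.1
Object = Algorithm
Name = Feature2D.FAST
nonmaxSuppression = Yes
threshold = 10
End_Object
Object = Algorithm
Name = DescriptorMatcher.BFMatcher
crossCheck = No
normType = 4
End_Object
End_Object
End
"""

algorithms = parse_algorithm_listing(sample)
feature2d = sorted(name for name in algorithms if name.startswith("Feature2D."))
print(feature2d)
print(algorithms["Feature2D.FAST"])
```

All values are kept as strings, so `Yes`/`No` and numeric defaults would need converting before use.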
